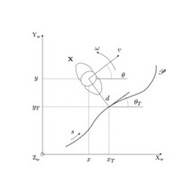

This paper presents the design of a decentralized connectivity-maintenance algorithm for the teleoperation of a team of multiple UAVs, together with an extensive human subject evaluation in virtual and real environments. The proposed connectivity-maintenance algorithm enhances earlier works by improving their applicability, safety, effectiveness, and ease of use through: (i) an airflow-avoidance behavior that avoids stack downwash phenomena in rotor-based aerial robots; (ii) a consensus-based action that enables fast displacements with minimal topology changes by having all follower robots move at the leader's velocity; (iii) an automatic decrease of the minimum degree of connectivity, enabling an intuitive and dynamic expansion/compression of the formation; and (iv) an automatic detection and resolution of deadlock configurations, i.e., situations in which the leader robot cannot move because connectivity-related and external inputs counterbalance each other. We also devised and evaluated different interfaces for teleoperating the team as well as different ways of receiving information about the connectivity force acting on the leader. Results of two human subject experiments show that the proposed algorithm is effective in various situations. Moreover, using haptic feedback to provide information about the team connectivity outperforms both providing no feedback at all and sensory substitution via visual feedback.
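To make the consensus-based action in item (ii) more concrete, the following is a minimal sketch of a generic leader-follower velocity consensus, not the controller used in the paper. The function name `consensus_step`, the `gain` value, the line-graph topology, and the example velocities are all assumptions chosen for illustration. Each follower repeatedly corrects its velocity using only the velocities of its neighbors, and on a connected graph all followers converge to the leader's velocity.

```python
def consensus_step(velocities, neighbors, leader, gain=0.2):
    """One synchronous consensus update (hypothetical helper, illustration only).

    velocities: list of velocity tuples, one per robot
    neighbors:  dict mapping robot index -> list of neighbor indices
    leader:     index of the robot whose velocity is set by the operator
    gain:       step size; gain * (max neighbor count) must stay below 1
    """
    updated = []
    for i, v_i in enumerate(velocities):
        if i == leader:
            # The leader does not average: its velocity comes from the operator.
            updated.append(v_i)
            continue
        dims = range(len(v_i))
        # Sum of disagreements with each neighbor, per velocity component.
        correction = [sum(velocities[j][k] - v_i[k] for j in neighbors[i]) for k in dims]
        updated.append(tuple(v_i[k] + gain * correction[k] for k in dims))
    return updated


# Assumed topology: four robots on a line, 0 - 1 - 2 - 3, with robot 0 as leader.
neighbors = {0: [1], 1: [0, 2], 2: [1, 3], 3: [2]}
velocities = [(1.0, 0.5), (0.0, 0.0), (0.0, 0.0), (0.0, 0.0)]

for _ in range(500):
    velocities = consensus_step(velocities, neighbors, leader=0)
```

Because each robot uses only its neighbors' velocities, the update is decentralized. Robot 3 never communicates with the leader directly, yet it still ends up tracking the leader's velocity through the chain.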

@article{AgPaRo-tase20,

Author = {Aggravi, M. and Pacchierotti, C. and {Robuffo Giordano}, P.},

Title = {Connectivity-Maintenance Teleoperation of a UAV Fleet with Wearable Haptic Feedback},

Journal = {{IEEE Transactions on Automation Science and Engineering}},

Year = {2020},

Pages = {0},

Doi = {10.1109/TASE.2020.3000060}

}